How to Improve Data Quality in Financial Services

Modern financial institutions run on data. When that data is incomplete, inconsistent, or late, risk models fail, customer journeys break, and regulators start asking difficult questions. Improving data quality should therefore become a core capability for safely growing the business.

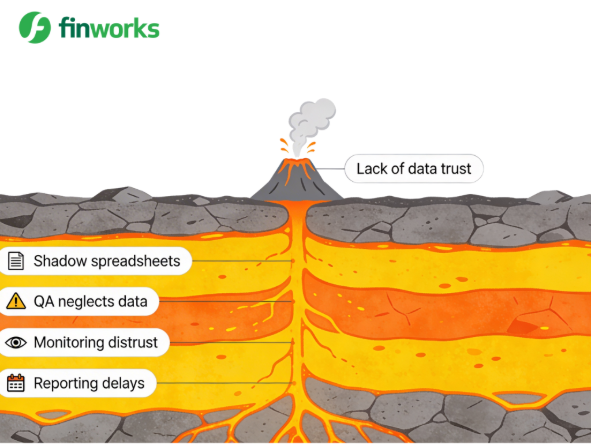

The real problem isn't the data — it's trust

In many firms, data quality is really a trust problem. Teams do not trust the numbers they receive, so they build their own shadow spreadsheets, recalculate metrics, or delay decisions while they double-check the data. That behaviour slowly erodes confidence in central systems and makes every reporting cycle harder than the last.

Think of it like a volcano. At the surface is the visible eruption: lack of data trust in reports and dashboards. Beneath the surface are the deeper causes: shadow spreadsheets, QA processes that neglect data, monitoring that nobody believes, and recurring reporting delays. Fix the smoke without cooling the lava and you'll be back here next quarter.

1. Understand the regulatory bar for quality

Financial services operate under some of the strictest data expectations of any industry. Frameworks like BCBS 239, Solvency II, MiFID II and local prudential rules all demand that risk and finance data be accurate, complete, timely, consistent and traceable across systems. Regulators increasingly expect firms to prove how they meet these standards, not just assert that they do.

For data leaders, this means linking every quality initiative back to these dimensions. When you define accuracy checks, target reductions in missing fields or improve the timeliness of feeds into risk and finance, you are not just tidying up data; you are directly reducing regulatory, operational and reputational risk.

2. Fix the foundations: governance, standards, ownership

High-quality data starts with clear foundations. A practical governance framework defines who owns each critical data element, what “good” looks like and how quality will be measured. In a bank or asset manager this includes ownership for customer, product, trade, position and reference data, spanning both business and technology.

Common data standards are the next layer. Shared taxonomies, naming conventions and formats for codes, dates, currencies and counterparties ensure that “the same thing” really is the same thing wherever it appears.

Finally, data stewardship makes governance real: named individuals who can resolve issues, agree changes and stop poor-quality data at source, rather than pushing the problem into downstream reconciliation teams.
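To make the idea of common data standards concrete, here is a minimal Python sketch of normalising dates and currency codes to one shared format. The accepted input formats, the function names and the choice of ISO-style output are illustrative assumptions, not a prescribed standard; a real implementation would take these from the firm's agreed data standards.

```python
from datetime import datetime

# Assumed list of input formats that source systems are allowed to send.
ACCEPTED_DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")


def normalise_date(raw: str) -> str:
    """Return the date as YYYY-MM-DD, or raise ValueError if no format matches."""
    text = raw.strip()
    for fmt in ACCEPTED_DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue  # try the next accepted format
    raise ValueError(f"unrecognised date format: {raw!r}")


def normalise_currency(raw: str) -> str:
    """Return an upper-case three-letter code, or raise ValueError."""
    code = raw.strip().upper()
    if len(code) != 3 or not code.isalpha():
        raise ValueError(f"not a three-letter currency code: {raw!r}")
    return code
```

Rejecting unrecognised values, rather than guessing, keeps the decision with the data steward instead of hiding it in code.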

3. Move controls upstream: validate at point of capture

Many institutions still discover data issues during end‑of‑month reporting or, worse, after a regulator queries a discrepancy. At that point the cost of remediation is high and the room for manoeuvre is low. A more effective pattern is to move controls as far upstream as possible, validating data at the point of capture.

Concretely, that means using mandatory fields with sensible defaults, format and range checks, reference data lookups for customer and product identifiers, and workflow-driven approvals for manual overrides. For complex products, trade capture screens should guide users through valid combinations rather than allowing free-text entries that have to be interpreted later. If you prevent bad data from entering the ecosystem, you reduce downstream noise and shorten close cycles.
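As a rough illustration of these checks, the Python sketch below validates a single trade record before it is accepted. The field names, the trade ID pattern, the notional limit and the in-memory reference sets are all hypothetical; in practice the lookups would call a reference data service and the limits would come from policy. It also assumes the capture layer has already typed the inputs (strings, a number and a `date`).

```python
import re
from datetime import date

# Hypothetical reference data and limits, for illustration only.
KNOWN_COUNTERPARTIES = {"CP001", "CP002"}
KNOWN_CURRENCIES = {"GBP", "USD", "EUR"}
MANDATORY_FIELDS = ("trade_id", "counterparty_id", "currency", "notional", "trade_date")
TRADE_ID_PATTERN = re.compile(r"T\d{6}")
MAX_NOTIONAL = 1_000_000_000


def validate_trade(trade: dict) -> list[str]:
    """Return a list of validation errors; an empty list means the trade passes."""
    # Mandatory field check: stop early if anything is absent or blank.
    errors = [
        f"missing mandatory field: {name}"
        for name in MANDATORY_FIELDS
        if trade.get(name) in (None, "")
    ]
    if errors:
        return errors

    # Format check.
    if not TRADE_ID_PATTERN.fullmatch(trade["trade_id"]):
        errors.append("trade_id must be T followed by six digits")
    # Reference data lookups.
    if trade["counterparty_id"] not in KNOWN_COUNTERPARTIES:
        errors.append("unknown counterparty_id")
    if trade["currency"] not in KNOWN_CURRENCIES:
        errors.append("unknown currency")
    # Range checks.
    if not 0 < trade["notional"] <= MAX_NOTIONAL:
        errors.append("notional outside permitted range")
    if trade["trade_date"] > date.today():
        errors.append("trade_date is in the future")
    return errors
```

Returning every error at once, instead of failing on the first, lets the capture screen show the user everything that needs correcting in a single pass.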

4. Use automation and AI carefully, but decisively

Manual, spreadsheet-based checks cannot keep pace with the volume and variety of financial data. Automation is essential. Modern data quality and observability tools can run rules at scale, detect anomalies and provide dashboards that highlight where things are deteriorating before they become incidents.

AI and machine learning add another layer of capability. They can spot patterns humans might miss, such as subtle drifts in data distributions, unusual correlations or recurring break types in specific systems. The key is to treat these models as decision support, not decision-makers: they surface signals, but governance decides how to act. Combining automated checks with clear ownership allows you to respond quickly without losing control.
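A machine learning model is beyond the scope of a short example, but the principle of surfacing a signal for a human owner can be sketched with a much simpler statistical stand-in: a z-score check on a daily metric such as record count. The function name and the default threshold of three standard deviations are assumptions chosen for illustration, not a recommended setting.

```python
from statistics import mean, stdev


def flag_anomaly(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Return True when `latest` sits more than `threshold` standard
    deviations away from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    spread = stdev(history)
    if spread == 0:
        # History is perfectly flat, so any change at all is unusual.
        return latest != history[0]
    return abs(latest - mean(history)) / spread > threshold
```

The function only returns a flag. What happens next, such as raising a ticket with the data owner or pausing a feed, stays a governance decision.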

5. Turn monitoring into a continuous discipline

Data quality is not a one-off project; it is a continuous discipline. Leading organisations define a set of key data elements, those fields that drive risk, capital, customer outcomes and regulatory reporting, and attach explicit service levels to them. Examples of practical service levels include:

- No more than X% of trades with a missing counterparty identifier.

- All positions reconciled by 09:00.

- Customer risk ratings refreshed within Y days of new information arriving.

Dashboards then track performance against these expectations by business line, system and data owner. Trends matter as much as point‑in‑time metrics: a gradual decline in timeliness or a rise in manual adjustments is an early warning signal that something has changed upstream. Regular reviews turn these insights into actions, so you fix processes, not just individual records.
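One of the service levels described in this section, the share of trades with a missing counterparty identifier, could be measured along these lines. This is a minimal sketch: the `counterparty_id` field name, the `ServiceLevelResult` class and the limit passed in are hypothetical, and the actual limit is whatever the business agrees for that data element.

```python
from dataclasses import dataclass


@dataclass
class ServiceLevelResult:
    name: str
    observed_pct: float  # measured percentage of failing records
    limit_pct: float     # agreed maximum percentage

    @property
    def breached(self) -> bool:
        return self.observed_pct > self.limit_pct


def missing_counterparty_check(trades: list[dict], limit_pct: float) -> ServiceLevelResult:
    """Measure the percentage of trades with no counterparty identifier."""
    total = len(trades)
    missing = sum(1 for trade in trades if not trade.get("counterparty_id"))
    observed = 100.0 * missing / total if total else 0.0
    return ServiceLevelResult("missing counterparty identifier", observed, limit_pct)
```

Storing each day's `observed_pct` by business line and system gives the trend view described above, so a slow deterioration is visible before the limit is actually breached.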

6. Communicate value in business, not technical, terms

Even the best-designed data quality programme will stall if it's framed in technical language. Senior leaders care about outcomes: fewer regulatory findings, lower capital add-ons, faster product launches, better customer experiences and reduced operating costs. Your business case needs to connect specific data improvements to those outcomes directly.

- Eliminate shadow spreadsheets in capital planning: reduces model risk and accelerates sign-off.

- Improve customer data completeness: reduces failed outbound contacts and enables more personalised journeys.

- Tighten reference data controls in trading systems: reduces breaks, manual work and operational losses.

Bringing it all together

Improving data quality in financial services is not about a single tool or a one-off clean-up. It is about building the controls, ownership and insight that make trusted data the default, not the exception. When governance is clear, controls sit at the point of capture, and monitoring is continuous, trust in the numbers follows naturally.

Our platform combines configurable workflows, strong data governance and rich audit trails, so you can embed quality into everyday processes rather than bolting it on at reporting time. We help financial institutions move away from shadow spreadsheets and manual reconciliations towards repeatable, transparent processes that stand up to regulatory scrutiny.

If you want to strengthen data quality for risk, finance or regulatory reporting, we can map one critical process, surface the key data issues and show how a modern platform addresses them.

Get in touch to see how Finworks can help you rebuild trust in your data.